For most parents, school still feels familiar.

Homework gets done. Grades come back. Teachers evaluate work. Progress is measured. The system may not be perfect, but it feels stable. Predictable. Understandable.

That sense of stability is fading.

Not because students suddenly became dishonest. Not because teachers stopped caring. But because a quiet assumption that held the entire system together is breaking:

That submitted work reflects student effort.

Generative AI didn’t invent cheating. What it changed is something far more destabilizing: how easily work can be outsourced, and how difficult it is to tell when it happens. As the line between a student’s own work and outsourced work blurs, trust begins to fail. And when trust fails, grades stop meaning what parents think they mean.

What’s Actually Changing

AI-assisted cheating is not one behavior. It’s a range of behaviors that live in the space between “help” and misrepresentation.

Some students use AI to brainstorm ideas or clean up grammar. In some classrooms, that’s allowed. In others, it isn’t. At the far end of the spectrum are students submitting essays, problem sets, or code that were generated almost entirely by a machine, with little understanding of the content.

Most schools now rely on a simple rule: if AI performs the intellectual work being assessed, and that use isn’t explicitly permitted, it counts as cheating.

The rule is easy to state.

Enforcing it is not.

How AI Cheating Actually Works Now

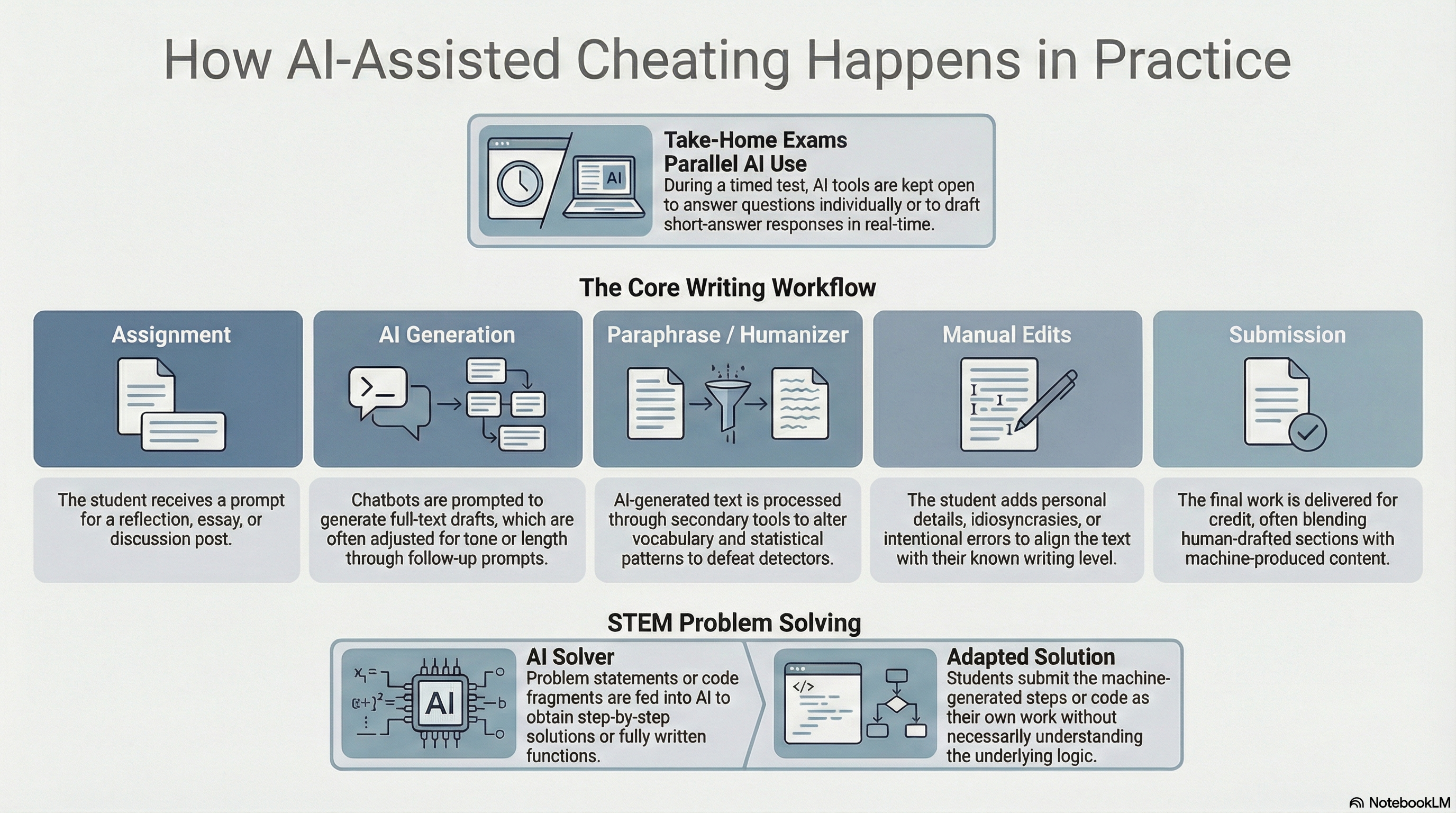

Early concern focused on copy-and-paste abuse. That still happens, but it’s no longer the main problem.

Today’s workflows are designed to pass unnoticed.

Students generate full drafts using AI, then lightly edit them to match their usual tone. In math, science, and computer science, AI tools provide step-by-step solutions that bypass the struggle required to learn the material. During take-home exams, AI runs quietly alongside the test, answering questions in real time.

To avoid detection, AI-generated text is often passed through paraphrasing tools or manually altered. Personal details are added. Small errors are inserted on purpose. Human-written paragraphs are blended with machine-generated ones. In disputed cases, students may even fabricate drafts or writing histories to simulate effort.

This is not reckless behavior. It is adaptive behavior inside a system where enforcement is uneven and detection is fragile.

Why This Isn’t Just “Cheating Like Before”

Cheating has always existed. AI changes the equation in three important ways.

First, scale. Many students can now cheat at the same time, cheaply and quietly.

Second, speed. Entire assignments can be completed in minutes instead of hours.

Third, opacity. AI output is newly generated text. There is no copied source for a plagiarism check to trace.

Academic integrity once relied on the idea that cheating left evidence. AI undermines that assumption. When misconduct becomes difficult to prove, honesty stops being reliably rewarded.

That is the shift happening now.

How Widespread Is This?

No one has a clean number — and that uncertainty matters.

Surveys consistently show that AI use among students is widespread. Most students describe it as “help,” not cheating. A growing share admit to using it to complete entire assignments. In K–12 settings, the line is often unclear because policies lag behind practice. In higher education, greater autonomy and more unsupervised work increase the opportunity for misuse.

Detection tools cannot resolve the ambiguity. Self-reporting is unreliable.

The system is operating with incomplete visibility, and that alone creates instability.

How Schools Are Trying to Respond

Institutions are responding, but not in a unified way.

Academic integrity policies are being rewritten to explicitly mention generative AI. Many courses now specify whether AI is prohibited, partially allowed, or allowed with disclosure. These rules often vary by instructor, even within the same school.

At the same time, educators are redesigning assessments. In-class writing, oral exams, draft-based grading, and assignments tied to personal experience are becoming more common. These approaches reduce AI misuse, but they also reduce flexibility and are harder to run at scale.

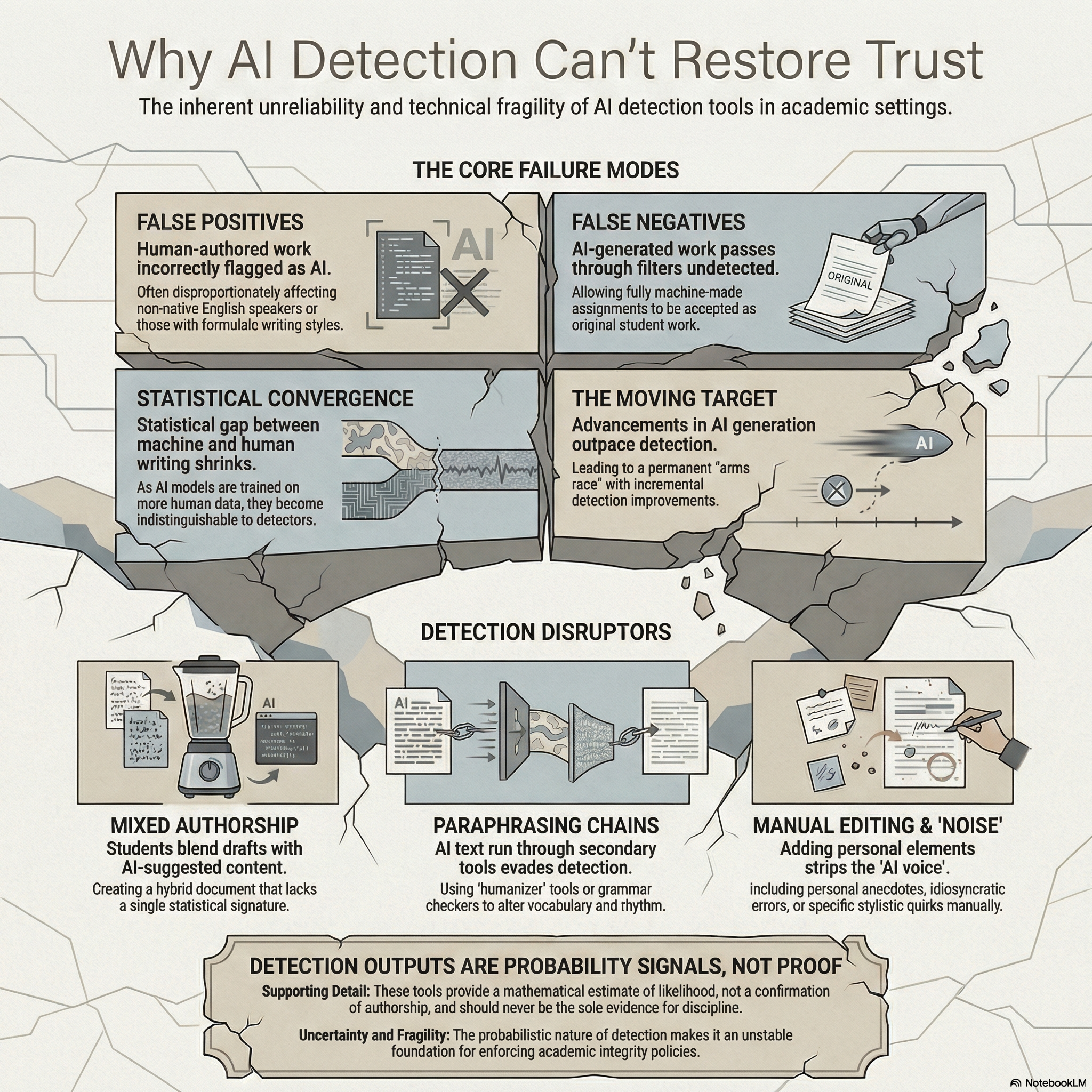

Detection tools are widely deployed, but quietly mistrusted.

They produce probability scores, not proof. They sometimes flag honest students and miss carefully edited AI work. Because of this, many institutions advise instructors not to rely on detection alone.

This leaves everyone in an uncomfortable position.

The Trust Failure

This is where the damage accumulates.

Honest students worry about being suspected. Students who cheat learn how to blend in. Teachers are pushed into investigative roles they never wanted. Grades lose their signaling value.

When enforcement becomes unreliable, effort stops feeling safe.

Trust erodes slowly — and then all at once.

What Parents Can Still Control

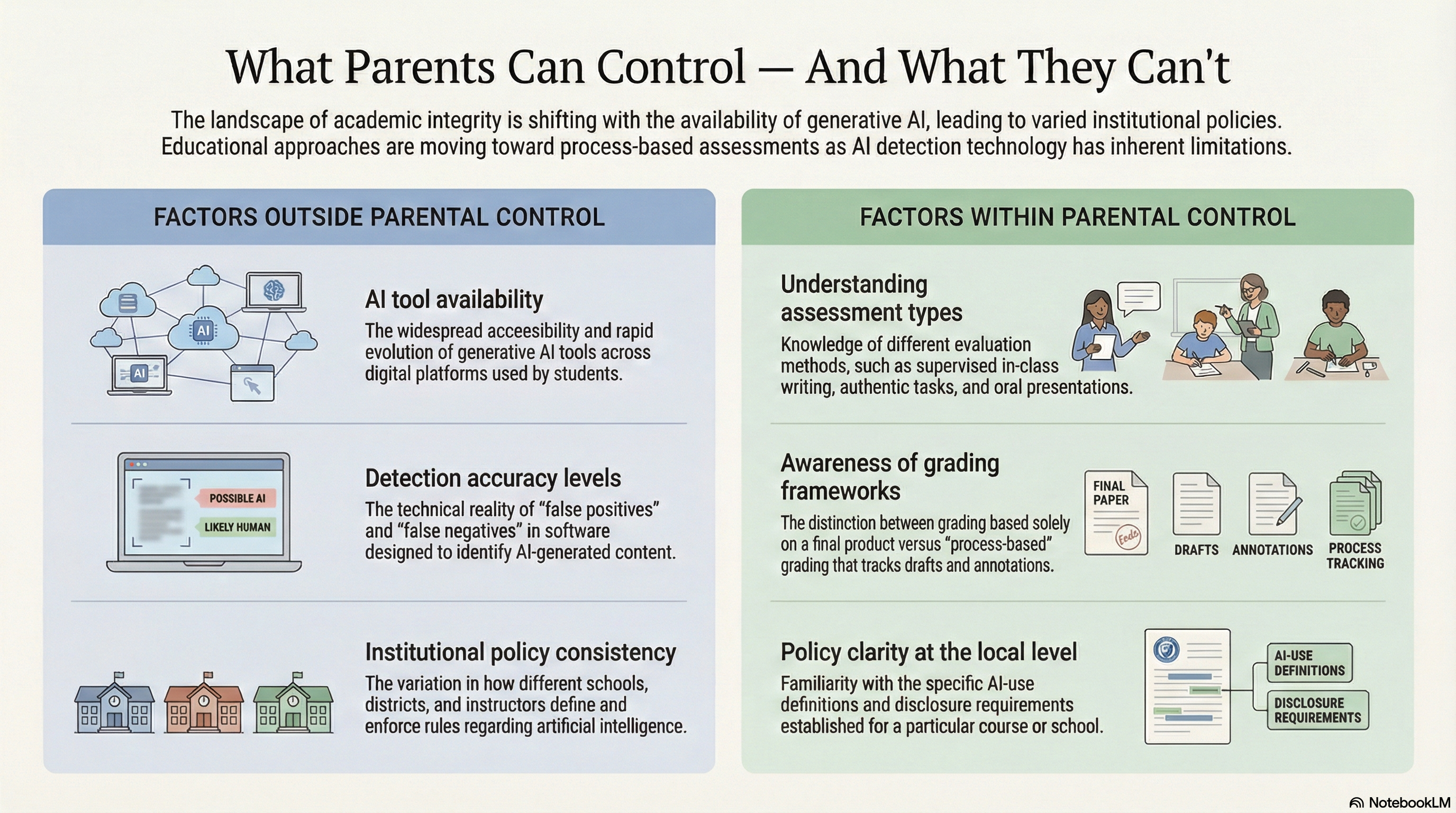

Parents cannot control whether AI exists, how accurate detection tools are, or how consistent institutional policies will be.

What they can do is understand how learning is measured. Whether grades reflect process or just output. Whether expectations are clear or ambiguous. Whether a school adapts thoughtfully or relies on brittle enforcement.

Those distinctions matter now more than ever.

What We Know — and What We Don’t

We can say with confidence that generative AI is now embedded in student workflows, that unauthorized use is being formally classified as cheating, that detection tools are unreliable as sole enforcement mechanisms, and that assessment redesign is currently the strongest mitigation.

What remains uncertain is how widespread serious misuse truly is, how long-term learning will be affected, whether detection can ever keep pace with generation, and how equity will shift for students who rely on AI for access.

The system has not collapsed.

But it is bending.

And the question families now face is not whether AI belongs in education.

It is whether trust can survive without change.